Harness Engineering: The Missing Layer in Specs-Driven AI Development

Many teams are still using AI like an intern.

We give instructions, review every output, request fixes, and repeat. It works for quick experiments. It does not work as a long-term delivery model.

As soon as AI becomes part of real software delivery, the intern model starts to crack:

- It does not scale

- It is hard to standardize

- It creates fragile outcomes that depend too much on constant human supervision

The shift we need is not better prompting.

The shift is better engineering.

We need to stop treating AI like an intern and start treating it like a system.

This post builds on my earlier article, “Vibe Coding, But Production-Ready: A Specs-Driven Feedback Loop for AI-Assisted Development.” That post explains the cycle. This one explains the harness layer that makes the cycle reliable.

I am also using Martin Fowler’s recent article, “Harness engineering for coding agent users,” as a conceptual reference.

The Shift: From In the Loop to On the Loop

In an in-the-loop model:

- Humans validate every output

- Feedback is manual and reactive

- Quality depends on reviewer effort and availability

In an on-the-loop model:

- Humans design the operating conditions

- Validation is automated and continuous

- Feedback loops are built into the system

You are no longer reviewing every answer.

You are designing the conditions for correctness.

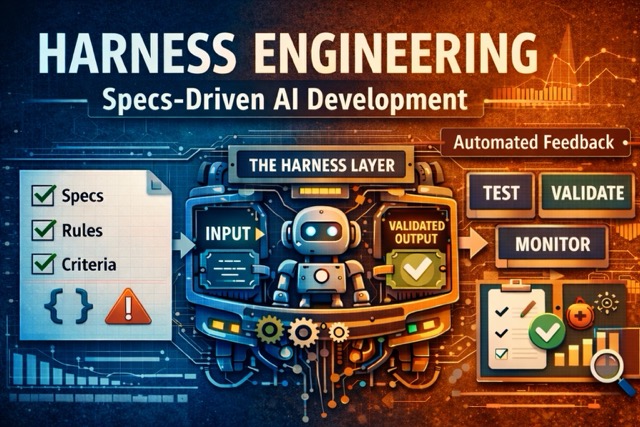

What Harness Engineering Means Here

Harness engineering is the control layer around AI behavior.

A useful definition in this context:

Harness engineering is the practice of designing feedforward guides and feedback sensors so the codebase is continuously regulated toward the desired state defined by specs.

This framing is powerful because it moves the conversation away from “which model should we use?” and toward “which control system do we need?”

In plain terms:

- Guides increase the chance of getting it right on the first generation

- Sensors increase the chance of self-correction before human review

Both are required.

A feedback-only setup repeats the same mistakes.

A feedforward-only setup never proves it worked.

Why Specs-Driven Development Matters

Specs-driven development is what makes harness engineering practical. Without it, there is nothing concrete for the harness to enforce.

In software, we do not ship production systems without:

- Requirements

- Contracts

- Tests

AI-enabled systems should follow the same discipline.

Specs-driven AI development means defining upfront:

- Expected outputs

- Constraints (format, language, policy, domain rules)

- Acceptance criteria

- Relevant edge cases

This moves AI from helpful assistant to reliable component.

From Specs to Control System

If we map harness engineering onto the specs-driven cycle from my previous post, this is the core model:

| Specs-driven step | Harness objective | Typical controls |
|---|---|---|
| Product intent | Prevent wrong optimization | Product constraints docs, non-goals, success metrics |
| High-level design | Prevent architecture drift | Architecture rules, module boundaries, dependency constraints |
| Low-level design + acceptance criteria | Prevent ambiguous behavior | Example-based scenarios, contract examples, fixture baselines |
| Implementation | Prevent local code regressions | Type checks, linting, static analysis, focused tests |
| Validation | Prevent integration/runtime surprises | CI gates, contract verification, runtime signals, manual checks |

The key is that the harness is not one tool.

It is the system of controls across the lifecycle.

The Two Pillars of a Harness

1. Feedforward (Before Execution)

Feedforward is the guidance you provide before the model runs:

- Clear instructions

- Structured context

- Few-shot examples

- Output schema expectations

Goal: reduce ambiguity before generation starts.
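As a rough illustration, the four feedforward inputs listed above can be kept as one structured object and rendered in a fixed order, so every generation starts from the same shape of guidance. This is a minimal sketch, not a prescribed format: the `FeedforwardContext`, `Guide`, and `Example` names and the section headings are hypothetical.

```java
import java.util.List;

/** Hypothetical sketch: feedforward guidance as a structured, renderable object. */
final class FeedforwardContext {

    /** One few-shot example: an input and the output we expect for it. */
    record Example(String input, String expectedOutput) {}

    /** The four feedforward inputs from the list above, kept together. */
    record Guide(String instructions, List<String> context,
                 List<Example> examples, String outputSchema) {

        /** Renders the guide as labeled sections in a fixed order. */
        String render() {
            StringBuilder sb = new StringBuilder();
            sb.append("## Instructions\n").append(instructions).append("\n\n");
            sb.append("## Context\n");
            context.forEach(c -> sb.append("- ").append(c).append('\n'));
            sb.append("\n## Examples\n");
            examples.forEach(e -> sb.append("Input: ").append(e.input())
                    .append("\nOutput: ").append(e.expectedOutput()).append('\n'));
            sb.append("\n## Output schema\n").append(outputSchema).append('\n');
            return sb.toString();
        }
    }
}
```

Keeping the guide as data rather than a free-form string means it can be versioned, reviewed, and reused across tasks like any other engineering artifact.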

2. Automated Feedback (After Execution)

Feedback should not depend on humans catching every issue manually.

A stronger approach uses automated sensors:

- Unit and integration tests for behavior

- Schema and contract validation

- Business-rule and heuristic checks

- Selective LLM-as-judge where semantic review is useful

If a check fails:

- Retry when failure is transient

- Fix when correction is deterministic

- Escalate when confidence is low or impact is high

That is how the system self-corrects.
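The retry, fix, or escalate decision above can be written down as an explicit routing rule instead of being left to reviewer judgment. The sketch below is one possible encoding: the `FailureRouter` and `Failure` names, the retry budget, and the confidence threshold are all assumptions chosen for illustration.

```java
/** Hypothetical sketch: routing a failed check to retry, fix, or escalate. */
final class FailureRouter {

    enum Action { RETRY, FIX, ESCALATE }

    /** Illustrative description of one failed sensor result. */
    record Failure(boolean transientError, boolean deterministicFix,
                   double confidence, boolean highImpact, int attempts) {}

    static final int MAX_ATTEMPTS = 3;         // assumed retry budget
    static final double MIN_CONFIDENCE = 0.7;  // assumed confidence threshold

    static Action route(Failure f) {
        // Escalation wins: low confidence or high impact goes to a human.
        if (f.highImpact() || f.confidence() < MIN_CONFIDENCE) {
            return Action.ESCALATE;
        }
        // Transient failures are retried until the budget is spent.
        if (f.transientError()) {
            return f.attempts() < MAX_ATTEMPTS ? Action.RETRY : Action.ESCALATE;
        }
        // Deterministic corrections can be applied automatically.
        if (f.deterministicFix()) {
            return Action.FIX;
        }
        // Anything the harness cannot classify is escalated by default.
        return Action.ESCALATE;
    }
}
```

Defaulting to escalation for unclassified failures is a deliberate choice in this sketch: the harness only acts on its own when it can say why.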

Between feedforward and feedback, the harness also handles the runtime layer: tool and function orchestration, retry and fallback strategies, and observability through logs, traces, and eval signals.
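To make the runtime layer concrete, here is a minimal sketch of a retry-then-fallback wrapper with a basic observability hook. The `RetryWithFallback` helper is hypothetical, and the `System.err` line stands in for whatever structured logging or tracing a real harness would use.

```java
import java.util.function.Supplier;

/** Hypothetical sketch: bounded retries followed by a fallback. */
final class RetryWithFallback {

    /** Calls primary up to maxAttempts times, then uses the fallback. */
    static <T> T call(Supplier<T> primary, Supplier<T> fallback, int maxAttempts) {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return primary.get();
            } catch (RuntimeException e) {
                // Stand-in for a structured log or trace event.
                System.err.printf("attempt %d failed: %s%n", attempt, e.getMessage());
            }
        }
        return fallback.get();
    }
}
```

A call such as `RetryWithFallback.call(() -> primaryModel(prompt), () -> cachedAnswer(prompt), 3)` would try the primary path three times before falling back; `primaryModel` and `cachedAnswer` are placeholders, not real APIs.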

Together, these layers are what make AI operable: controllable, testable, and improvable.

Now, what happens when teams skip this?

The Real Risk: Adoption Without Discipline

Teams will use AI regardless of whether a harness is in place. Without one, familiar anti-patterns appear fast:

- Prompt strings treated like specs

- Minimal validation

- Over-trust in first outputs

- Systems that look fine until they fail in production

And as AI accelerates code generation, the gap widens. Delivery speed increases, but confidence does not:

- Reviewers become bottlenecks

- Hidden drift accumulates faster

- Teams pay quality debt later with larger rollback costs

A fair concern from leaders is that not every team is ready for this. The key issue is not the capability of junior engineers. It is the operating model.

This approach requires teams to think differently:

- Define correctness before execution

- Build feedback loops, not just prompts

- Engineer for failure handling, not only for ideal paths

These are learnable skills, but they need structure and coaching.

Harness engineering is how specs-driven teams avoid the discipline gap. It externalizes team expertise into explicit controls, so quality is not dependent on one senior engineer noticing everything in review.

A Minimal Starting Harness for Specs-Driven Java Teams

You do not need a giant framework to start.

Start with one topology, for example a CRUD Spring Boot service, and build a repeatable harness template.

Step 1: Define the Spec Pack

- Problem statement

- Non-goals

- Acceptance examples

- API or event contract examples

- Operational constraints (latency, logging, security)

For example, a spec pack for a POST /customers endpoint might look like:

```yaml
# spec-pack/create-customer.yml
problem: Create a new customer record with validated contact info
non_goals:
  - Deduplication logic (handled by a separate service)
acceptance_examples:
  - input: { name: "John Doe", email: "john@example.com" }
    expected_status: 201
    expected_body_contains: ["id", "createdAt"]
  - input: { name: "", email: "invalid" }
    expected_status: 400
    expected_errors: ["name is required", "email format is invalid"]
contract: openapi/customers.yml#CreateCustomer
constraints:
  max_latency_ms: 200
  logging: structured_json
  auth: bearer_token
```

This single file gives the agent clear boundaries, the test suite clear targets, and the reviewer a clear checklist.
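To show how the acceptance examples become test targets, the sketch below encodes the two examples from the spec pack as plain Java validation logic. It is an illustration under assumptions: the `CreateCustomerSpec` and `CreateCustomerRequest` names are hypothetical, the email pattern is deliberately simplistic, and a real Spring Boot service would more likely express these rules through its own validation layer.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

/** Hypothetical sketch: the spec pack's acceptance examples as executable rules. */
final class CreateCustomerSpec {

    /** Assumed request shape; the field names come from the spec pack. */
    record CreateCustomerRequest(String name, String email) {}

    // Deliberately simple email check for illustration only.
    private static final Pattern EMAIL =
            Pattern.compile("^[^@\\s]+@[^@\\s]+\\.[^@\\s]+$");

    /** Returns validation errors using the wording from expected_errors. */
    static List<String> validate(CreateCustomerRequest req) {
        List<String> errors = new ArrayList<>();
        if (req.name() == null || req.name().isBlank()) {
            errors.add("name is required");
        }
        if (req.email() == null || !EMAIL.matcher(req.email()).matches()) {
            errors.add("email format is invalid");
        }
        return errors;
    }

    /** Maps the validation outcome to the status codes in acceptance_examples. */
    static int expectedStatus(CreateCustomerRequest req) {
        return validate(req).isEmpty() ? 201 : 400;
    }
}
```

Because the error strings match the spec pack word for word, a mismatch between generated code and the spec shows up as a failing assertion rather than a review comment.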

Step 2: Define Feedforward Guides

- Coding conventions

- Architectural boundaries

- “How to implement” workflow

- “How to test” workflow

Step 3: Define Feedback Sensors

- Build and type checks

- Fast tests

- Structural rules

- Contract verification

- One semantic review step for high-risk changes
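One way to picture these sensors is as a list of named checks that run against each change and report which ones failed. The sketch below is a simplified stand-in: `SensorPipeline`, `Change`, and the two example sensors are hypothetical, and in practice the structural rule would usually come from a dedicated architecture-testing tool rather than string matching on imports.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Predicate;

/** Hypothetical sketch: feedback sensors as named checks over a change. */
final class SensorPipeline {

    /** Simplified view of a change: where it lives, what it imports, test status. */
    record Change(String sourcePackage, List<String> imports, boolean testsPass) {}

    /** A sensor is a name plus a predicate that is true when the check passes. */
    record Sensor(String name, Predicate<Change> passes) {}

    /** Two illustrative sensors: fast tests and one structural rule. */
    static List<Sensor> defaultSensors() {
        return List.of(
            new Sensor("fast-tests", Change::testsPass),
            new Sensor("controller-must-not-import-repository", c ->
                !(c.sourcePackage().endsWith(".controller")
                    && c.imports().stream().anyMatch(i -> i.contains(".repository."))))
        );
    }

    /** Runs every sensor in order and returns the names of those that failed. */
    static List<String> run(Change change, List<Sensor> sensors) {
        List<String> failed = new ArrayList<>();
        for (Sensor sensor : sensors) {
            if (!sensor.passes().test(change)) {
                failed.add(sensor.name());
            }
        }
        return failed;
    }
}
```

Returning the failed sensor names, rather than a single pass or fail flag, gives the agent something specific to correct before a human gets involved.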

Step 4: Close the Steering Loop

When an issue repeats, do not only fix code.

Improve the harness:

- Add a rule

- Add a sensor

- Improve a skill

- Improve a spec example

This is where harness engineering becomes an ongoing engineering practice, not a one-time configuration.

Once the harness exists, the next question is how to roll it out across teams.

How to Help Teams Succeed

Make the Right Way the Easy Way

Do not rely on best-practice docs alone.

Encode standards directly:

- Reusable templates

- Built-in schema checks

- CI validation gates

- Default retry and escalation behavior

If the harness is optional, it will be skipped.

Connect to Known Engineering Patterns

Reduce cognitive load by mapping AI concepts to familiar ones:

- Prompt contract → function input contract

- Output validation → test assertions

- Retry policy → error-handling strategy

- Evaluation set → test fixtures

Upgrade Review Questions

Instead of asking “Is this a good prompt?”, ask:

- What defines correctness here?

- Which sensor validates this?

- What is the failure path?

- When do we escalate?

This reframes AI work as engineering work.

What to Stop Doing

If you are moving to specs-driven AI development, these habits are expensive:

- Treating a single prompt as a “spec”

- Assuming green tests mean behavior is correct

- Letting architecture rules live only in tribal knowledge

- Running expensive checks everywhere without a cost strategy

- Fixing repeated failures without updating harness controls

These patterns keep teams in reactive mode.

A Better Mental Model

A useful shorthand:

- Specs define the target state

- Harness defines the control system

- Agents execute changes inside that control system

- Humans steer and improve the system over time

This is how teams reduce supervision load without reducing quality.

Not by trusting the model more.

By engineering the harness better.

Final Thought

Prompting is the entry point. It is not the operating model.

The real leverage comes from building systems where AI behavior is guided, validated, and continuously improved.

That is the move: from intern to system.